The tasks described in the use case section are implemented as follows: STOCK_S3_BASE_PATH: Pathway to staging S3 bucket/folder where data would be stored.SNOWFLAKE_CONN_ID: Connection ID referencing a connection made in the Airflow UI to my Snowflake instance.TickerList: List of different stock tickers to gather data for.Relevant constants are defined in the top-level code and used throughout the DAG:

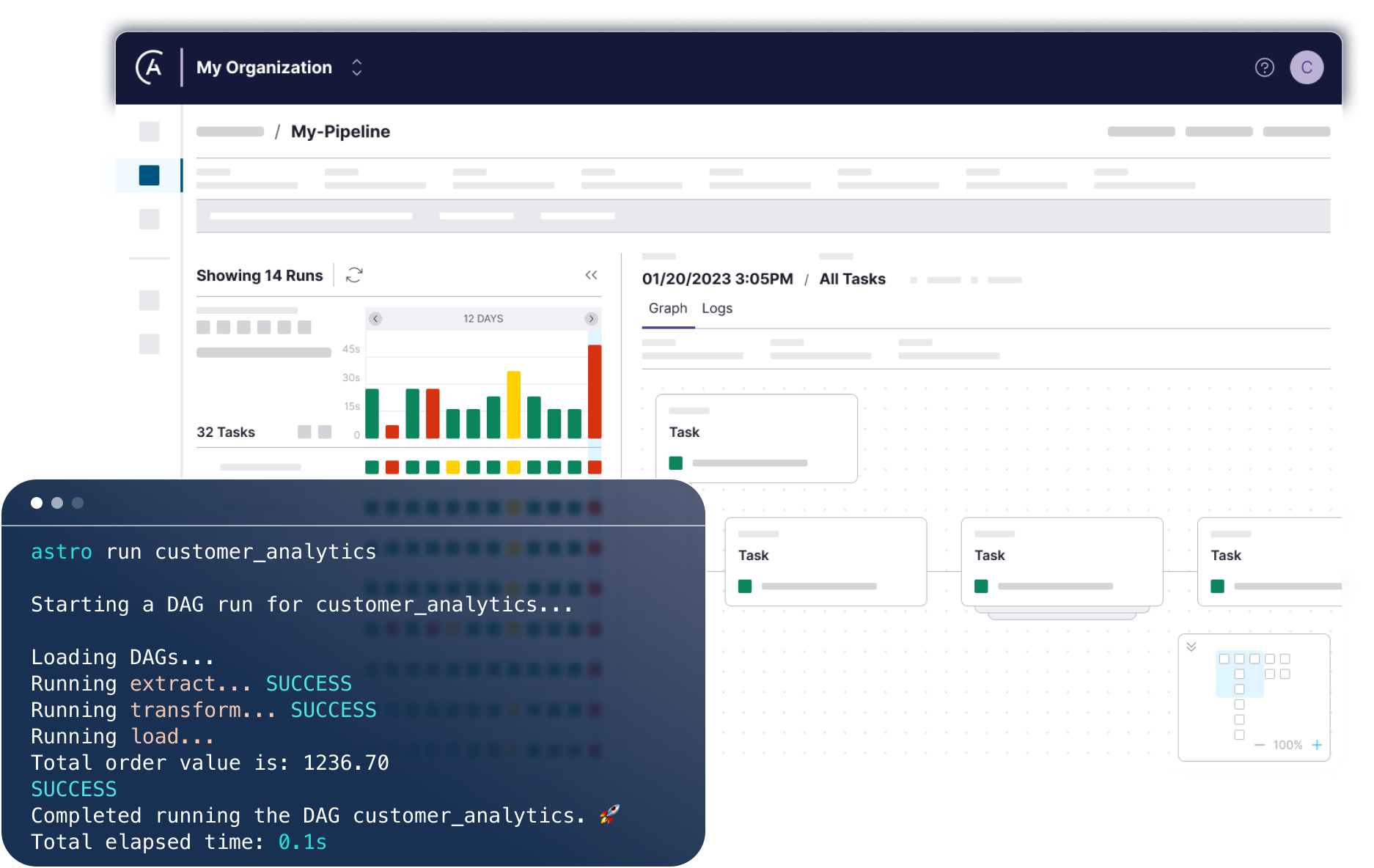

The code for my stock ETL pipeline looks like this: In Airflow, you define your data pipeline (DAG) as Python code. Then, I ran “astro dev start” to build and run a dockerized local Airflow environment.Īfter that, I fired up VSCode and started building my DAG. First, I created an empty directory called “DBricksCompare” and then ran “astro dev init” to create a file structure as seen below:Īfter doing so, I created a new Python file in the dags folder called StockData.py, which is where I started building my DAG. To get started with a fresh Airflow environment, I downloaded and used the Astro CLI to create a local Airflow environment using Docker Desktop. Load Stock Data: Transfers the data from S3 to a Snowflake table.Store Stock Data: Uploads the transformed data to an S3 bucket.Transform Stock Data: Performs transformations on the cleaned data to identify the highest stock price for each month.Clean Stock Data: Cleans the loaded stock data by selecting specific columns, including price, stock ticker, volume, and date.Extract Stock Data: Fetches stock data for a given ticker from a public URL and loads it into a pandas DataFrame.While I’ll be using different methods to accomplish each of these tasks, the core function of each task within each pipeline will remain the same. Finally, I’ll load those results into an S3 staging bucket before transferring them into a production Snowflake database. I will clean it by selecting only the needed columns and then I’ll transform it to find the highest prices per month for each stock in the past year. Integrates with 100’s of providers of various servicesįor this example, I will collect stock price data on three companies from an API endpoint. Integrates with Cloud Object Stores and ODBC/JDBC

Limited best suited for orchestrating tasks rather than performing heavy data processing High-performance data processing, optimized for large-scale operations Must connect to an external Spark cluster to execute Spark jobsīasic workflow execution leveraging 3rd party tools for scheduling and complex task dependencies.ĭynamic workflow creation, task dependencies, and advanced scheduling with branching, retry, etc. Natively runs Spark jobs at high efficiency

No connection management system, must set them manually and configure Spark profile to interact with them within each notebook/task that uses themĬreate connections in the Airflow UI or programmatically that can be used across environment, use them by referencing connection objects Manage dependencies at the environment level, import necessary libraries once at DAG level Must manage dependencies for each task individually, no environment dependency management system I’ll include the setup process, building out the ETL pipeline, and pipeline execution, to show you what the developer experience is like working with these two tools.įinally, I’ll explore where Airflow and Databricks can be used together, and how the sum of these two platforms can be even greater than their parts! TL DR: Ease of Use Comparison Chart Categoryīig data analytics and processing using optimized Apache Spark To help other data engineers out there who are deciding between the two tools, in this blog post I’ll explore how to implement a common ETL use case on each platform. While there is much talk online of using Databricks OR Airflow for ETL workflows, these two platforms are very different in how they function and for which use cases they are best suited for. Apache Airflow is an open-source platform designed to programmatically author, schedule, and monitor workflows of any kind, orchestrating the many tools of the modern data stack to work together. Databricks, founded by the creators of Apache Spark, offers a unified platform for users to build, run, and manage Spark workflows. Databricks and Airflow are two influential tools in the world of big data and workflow management.
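To make the clean and transform steps of this use case more concrete, here is a minimal, dependency-free sketch of the logic. It is not the DAG code from this post: it uses plain Python dictionaries rather than a pandas DataFrame, and the sample rows, the placeholder tickers, the column names (`date`, `ticker`, `open`, `close`, `volume`), and the function names `clean_rows` and `monthly_highs` are all assumptions made up for illustration.

```python
# Hypothetical sample rows standing in for extracted stock data.
# Tickers and prices are placeholders, not real market data.
RAW_ROWS = [
    {"date": "2023-01-03", "ticker": "AAA", "open": 10.0, "close": 11.5, "volume": 1000},
    {"date": "2023-01-17", "ticker": "AAA", "open": 11.0, "close": 12.5, "volume": 1200},
    {"date": "2023-02-01", "ticker": "AAA", "open": 12.0, "close": 14.0, "volume": 900},
    {"date": "2023-01-05", "ticker": "BBB", "open": 20.0, "close": 19.5, "volume": 800},
]

# Assumed names for the columns kept by the clean step
# (date, stock ticker, price, volume).
KEEP_COLUMNS = ("date", "ticker", "close", "volume")


def clean_rows(rows):
    """Keep only the columns needed downstream and drop everything else."""
    return [{col: row[col] for col in KEEP_COLUMNS} for row in rows]


def monthly_highs(rows):
    """Return the highest price seen for each (ticker, "YYYY-MM") pair."""
    highs = {}
    for row in rows:
        # An ISO date string starts with "YYYY-MM", so slicing yields the month.
        key = (row["ticker"], row["date"][:7])
        if key not in highs or row["close"] > highs[key]:
            highs[key] = row["close"]
    return highs


if __name__ == "__main__":
    cleaned = clean_rows(RAW_ROWS)
    for (ticker, month), price in sorted(monthly_highs(cleaned).items()):
        print(ticker, month, price)
```

With pandas, the same transform would typically be expressed as a group-by on ticker and month followed by a max. The loop above only spells out what that aggregation does.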
